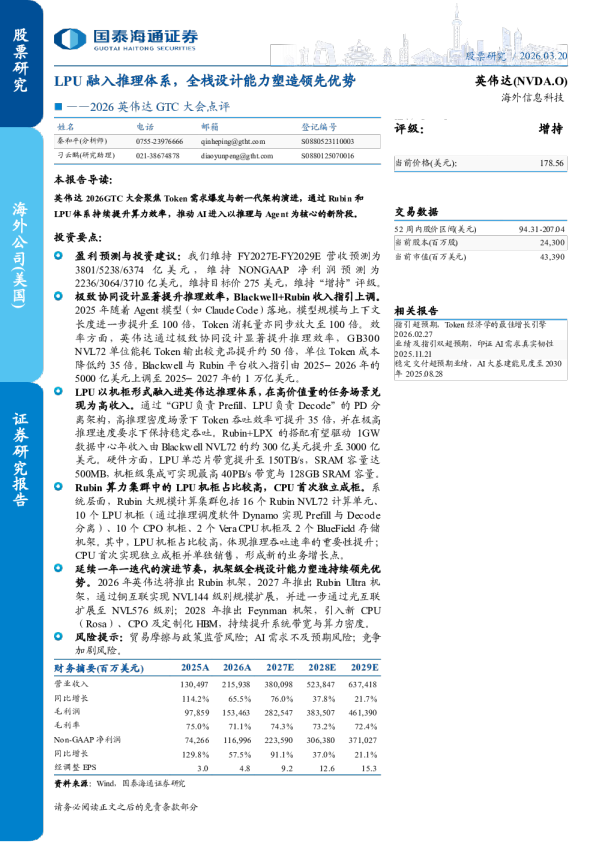

GTC 2026: nVidia guiding a stronger 2027

AI semi & tech | EQUITY: TECHNOLOGY

LPU boosts inferencing; pluggable/CPO and copper all growing; 2027 capex/FCF debates; ongoing shortages

Research Analysts, Asia Technology: Aaron Jeng, CFA (NITB, aaron.jeng@nomura.com, +886 (2) 21769962); Anne Lee, CFA (NITB, anne.lee@nomura.com, +886 (2) 21769966); CW Chung (NIHK, cwchung@nomura.com, +852 2252 6075); Donnie Teng (NIHK, donnie.teng@nomura.com, +852 2252 1439); Bing Duan (NIHK, bing.duan1@nomura.com, +852 2252 2141); Kenny Chen (NITB, kenny.chen@nomura.com, +886 2 21769978)

Our key takeaways from nVidia GTC 2026

nVidia (NVDA US, Not rated) hosted its annual GPU Technology Conference (GTC) on 16 March (11am PT). Founder and CEO Jensen Huang delivered the keynote speech, highlighting key topics including: (1) an inference inflection point and vast computing demand, which he noted would lead nVidia's AI chip revenues to exceed USD1tn through 2025-2027E (Fig. 3); (2) updates on nVidia's next-generation AI GPU platforms Vera Rubin, Rubin Ultra, and Feynman, including major announcements on the Groq 3 (unlisted) language processing unit (LPU) chip/LPX rack, and the adoption of co-packaged optics (CPO) for scale-up starting from the Feynman generation; and (3) the collaboration with OpenClaw to found the agentic platform NemoClaw for the OpenClaw agent platform. Other discussions ranged from space computing power and the autonomous driving platform to developments in physical AI/robotics (replay, news).

Our quick thoughts after the event:

nVidia's messages indicate a stronger AI chip revenue opportunity in 2027 (USD150-200bn more than for 2026, Fig. 3), which still looks to be supported by the top 5 hyperscalers' consensus FCF (before their aggregated FCF turns negative). That said, hyperscalers' sharply falling FCF could still fuel a debate about capex sustainability. Investors might look for AI monetization to happen more meaningfully from 2026, in our view.

nVidia's bullish tone also explains why the shortage/supply constraints in the Asia hardware supply chain through 2026 have been/would be so severe, in our view. Recall that "supply constraint" was the key reason behind our bullish AI semi and hardware call back in 4Q25, when the Street was concerned about an AI bubble. We expect upward earnings revisions for Asia AI semi and hardware supply chain names to continue following the GTC.

(1) GPUs: We do not expect Rubin GPU production to ramp up significantly until 4Q26F, which implies that actual rack shipments could be even later. One of the bottlenecks is the HBM4 schedule, which looks challenging as progress at SK Hynix (000660 KS, Buy) has been underwhelming, while Samsung's (005930 KS, Buy) yield rate is not yet high. Thus, nVidia may still rely heavily on Blackwell GPU (which uses HBM3E) sales this year. We expect Feynman to adopt SoIC technology for the first time, similar to AMD's (AMD US, Not rated) MI series. Our initial impression is that it will combine 2x bottom dies and 4x top dies as compute tiles, along with 4x I/O dies and 12x HBM4E side by side.

(2) LPUs/LPX rack: LPX, which consists of 32 LPU trays with 8 LPUs per tray, is an accelerator that must be paired with VR racks (Fig. 7 and Fig. 8). Samsung is currently the sole supplier of the LPU, which does not require CoWoS packaging. We think nVidia will mass-produce LPUs through Samsung Electronics' (SEC) 4nm foundry process and commence using them from 2H26. SEC's 4nm process, which predates its adoption of gate-all-around (GAA), has a stable yield rate and currently has demand exceeding capacity. In our view, this is a meaningful customer win for SEC following the 2nm (GAA) Exynos 2600, although revenue in 2027F would likely be only around USD3bn (compared to memory OP of more than USD200bn).
4nm-process capacity is facing concentrated demand for HBM base dies and from customers including AMD, Baidu (BIDU US, Buy) and Qualcomm (QCOM US, Not rated), and we believe that SEC might leverage its meaningful experience in mass-producing various chips on 4nm capacity to improve its 3nm and 2nm processes going forward. In addition, if the company proactively invests in the foundry business based on sizable income from its memory division, we believe the gap between SEC and TSMC (2330 TT, Buy) can be narrowed.

Production Complete: 2026-03-17 17:25 UTC

The LPU is a logic chip developed by Groq, in which nVidia invests. The LPU exhibits high performance for certain inferencing based on its large on-chip SRAM size. While the GPU relies heavily on HBM and is applicable for general purposes, the LPU relies heavily on SRAM and provides high performance/very low latency for specific inference workloads. Google's (GOOGL US, Not rated) TPU has characteristics that fall between those of the GPU and the LPU. We think this is part of a megatrend in which various logic chips (GPU, TPU, LPU etc.) and various memories, serving different roles and functions in overall AI server systems, pursue better TCO amid a growing trend toward inferencing. We think the impact on HBM looks to be neu