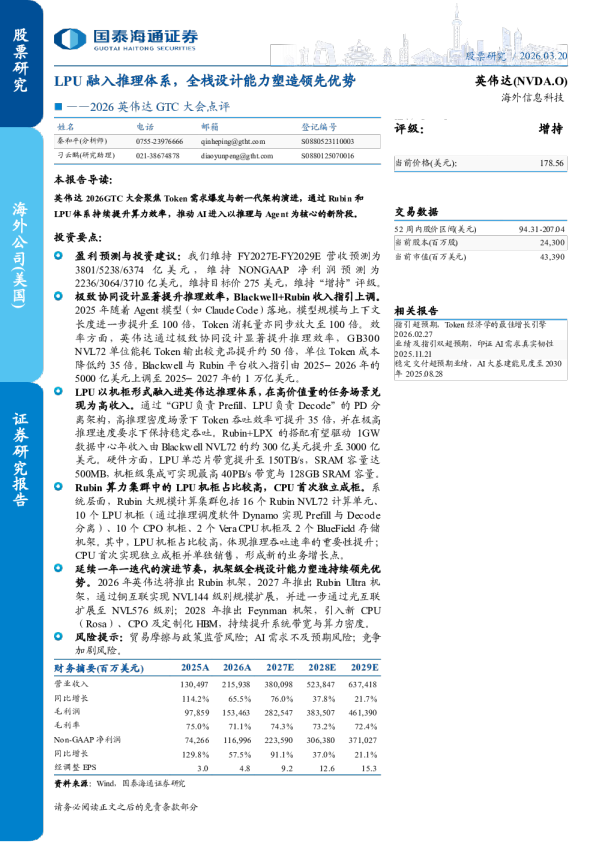

GTC 2026 – The Inference Kingdom Expands

Groq LP30, LPX Rack, Attention FFN Disaggregation, Oberon & Kyber Updates, Nvidia's CPO Roadmap, Vera ETL256, CMX & STX

At GTC 2026, Nvidia delivered an event packed full of groundbreaking announcements. Nvidia’s pace of innovation is not showing any signs of slowing, as they introduced three entirely new systems this year: Groq LPX, Vera ETL256, and STX. Also announced were updates to Nvidia’s Kyber rack architecture, and CPO made its debut for scale-up networking with the unveiling of the Rubin Ultra NVL576 and Feynman NVL1152 multi-rack systems. Early hints on Feynman’s architecture were also a key topic. A Jensen callout for InferenceX during the keynote was a highlight.

This is our GTC 2026 recap, and we will address many of the key questions that Nvidia has left unanswered. Specifically, we will go through the LPX rack and LP30 chip and explain how attention and feed-forward network disaggregation (AFD) works; give more details on the various rack architectures behind NVL144, NVL576, and NVL1152; clarify just how much optics will be inserted; and explain the rationale behind the dense Vera ETL256. The next-generation Kyber rack had some big updates and some hidden details.

Groq

First up is the Groq LPU. One of the most significant recent events in AI infrastructure was Nvidia’s “acquisition” of Groq. Strictly speaking, Nvidia paid Groq $20B to license their IP and hire most of the team.
This functions almost as an acquisition, though its structure technically falls short of being legally considered one, thereby simplifying or obviating the need for regulatory approvals. Given Nvidia’s market share, if this transaction were structured as a full acquisition and put to antitrust review, it would likely not go through. The other benefit is that it avoids a drawn-out transaction closing process. Nvidia got instant access to Groq’s IP and people. This is why, less than four months after the deal was announced, Nvidia already has a system concept that is being integrated into the Vera Rubin inference stack.

Let’s now go through a refresher on the LPU architecture to see how Groq’s LPU complements Nvidia’s GPU. For more details, see our original Groq piece. The premise from that piece remains unchanged: the standalone Groq LPU system is not economical for serving tokens at scale, but it can serve tokens very quickly, which can command a large market premium. This is the premise behind how the LPU fits into a disaggregated decode system.

LPU chip

Groq’s first and only publicly announced LPU architecture was detailed in their ISCA 2020 paper. Unlike typical hardware architectures connecting many general-purpose cores, Groq re-organized the architecture into groups of single-purpose units connecting to other groups of different purposes, and they named the groups “slices.” Between functional units are streaming registers, scratchpad SRAM for functional units to pass data to each other.
Groq opted for single-level scratchpad SRAM instead of a multi-level memory hierarchy to make the hardware execution deterministic.

Concretely, the LPU architecture has VXM slices for vector operations, MEM slices for loading/storing data, SXM slices for tensor shape manipulation, and MXM slices for performing matrix multiplication. Spatially, the slices are laid out horizontally, allowing the data to stream horizontally. Within a slice, instructions are pumped vertically across units. Conceptually, the LPU resembles a systolic array that pumps instructions vertically and data horizontally.

The data flow and instruction flow design requires fine-grained pipelining to achieve high performance. Since LPU architecture makes computation deterministic,